By: Dr. Navin Budhiraja, Vianai Systems Chief Technology Officer & Head of VIANOPS Platform

As more and more companies rely on machine learning models as core to their business processes, the need to monitor and manage the performance of these models becomes increasingly important. This need has steadily increased over the last few years with the natural evolution of technology adoption within existing organizations.

What is new is the rapid acceleration of businesses that rely on ML models as the core of the entire business model; in other words, the models are the business. These are not just models running in support of various processes, but models that run the business, delivering the goods or services themselves. Examples include financial services firms, payment processing firms, online retailers, video and social media platforms, gaming platforms and others.

Layered onto this new kind of high-performance ML context is the external environment, where we are seeing a radical acceleration of Large Language Models (LLMs) and a desire among organizations to bring advanced AI techniques quickly into their day-to-day business units, directly to users.

With these rapid accelerations, we are also seeing a generational shift in the need for ML model monitoring capabilities to ensure these models are reliable, trustworthy and high-performing – and to do this at an immense scale while keeping costs down.

Where Does the Complexity Come From?

ML model monitoring is not a new concept. Along with observability, it has been in play for some time. However, the need for model supervision and retraining increases as companies’ MLOps become more complicated, with complex models running at scale. Monitoring tools have been around for a while but were developed in a much different context. Just a few years ago, we didn’t have the proliferation of machine learning models running in organizations that we have today. Even the word “model” was primarily associated with rules-based models and other non-ML models that an organization might use for financial forecasting, supply chain predictions and other processes.

Therefore, tools developed at that time were built for organizations that needed basic monitoring capabilities on a relatively small scale. The scale was small because models were few and had a low number of features, often only in the tens. In addition, the models were interpretable and explainable and hence did not need constant monitoring, as there was less risk of drift. Many companies at this time were just beginning to bring on data scientists to build models. Running models in production, even at a low scale, seemed quite far off in the future.

The reality is that the last few years have brought a paradigm shift. Companies have moved beyond experimentation to become more sophisticated in machine learning. With this paradigm shift toward the pervasive use of ML models, we have also seen the size, complexity, and risk of these models dramatically increase – leading to the urgent need for a new kind of ML model monitoring solution, one that can handle the massive scale challenge and power the agility that data science and MLOps teams need.

The complexity is in the details. Complexity doesn’t necessarily come from the number of models running in production. It can come from very few models that each on their own are highly complex or massive in size and scope.

The complexity is also in agility. With less complex models, teams can do scheduled and infrequent runs to observe data and make necessary changes. Alerts on less complex models are also easier to drill into, as there are fewer layers to sort through.

Today the world looks very different. Many companies now run ML models with hundreds or thousands of features, millions or even billions of data points that need to be analyzed, tens of thousands of inferences per second, and so on.

Traditional tools are simply not designed for this kind of scale and complexity. Even when they can scale to larger and more complex models, the costs become prohibitive.

What Exactly is Monitoring and Observability at Massive Scale?

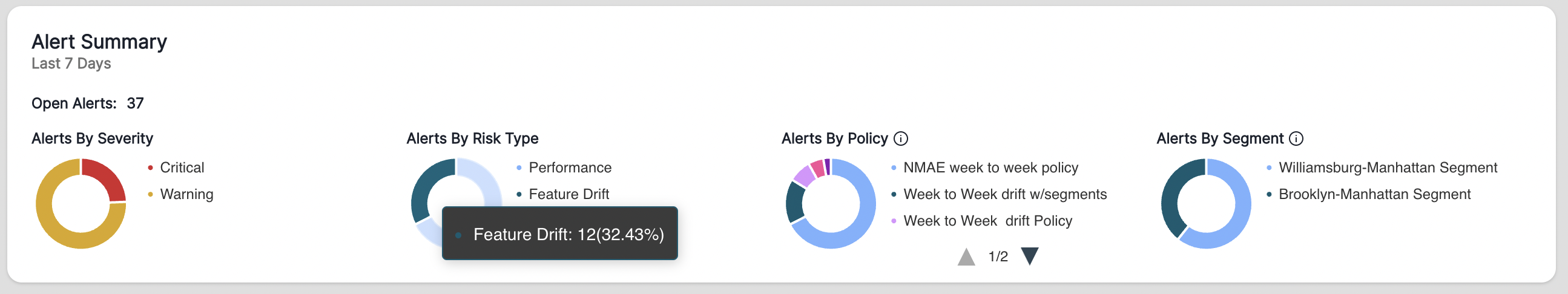

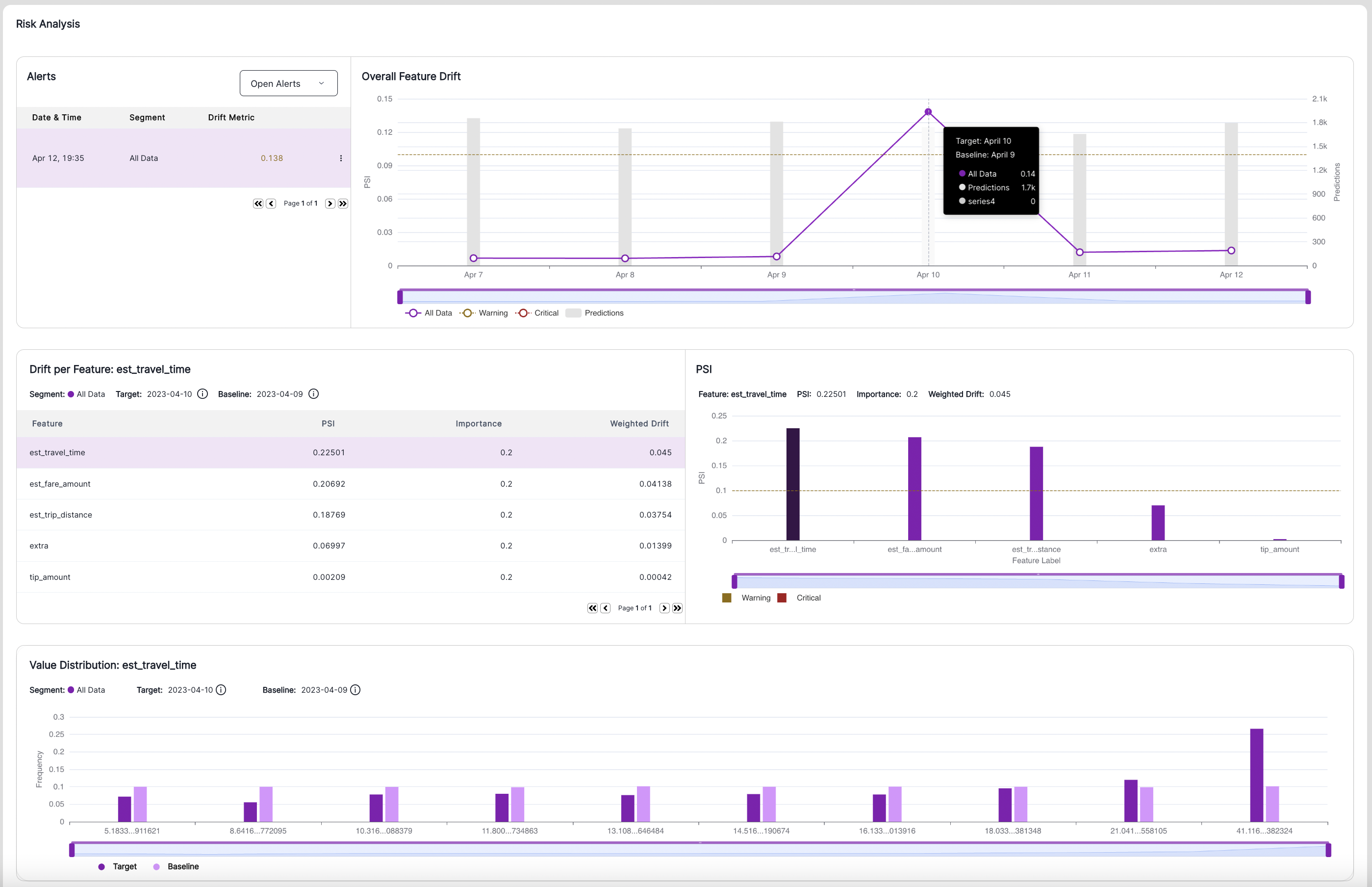

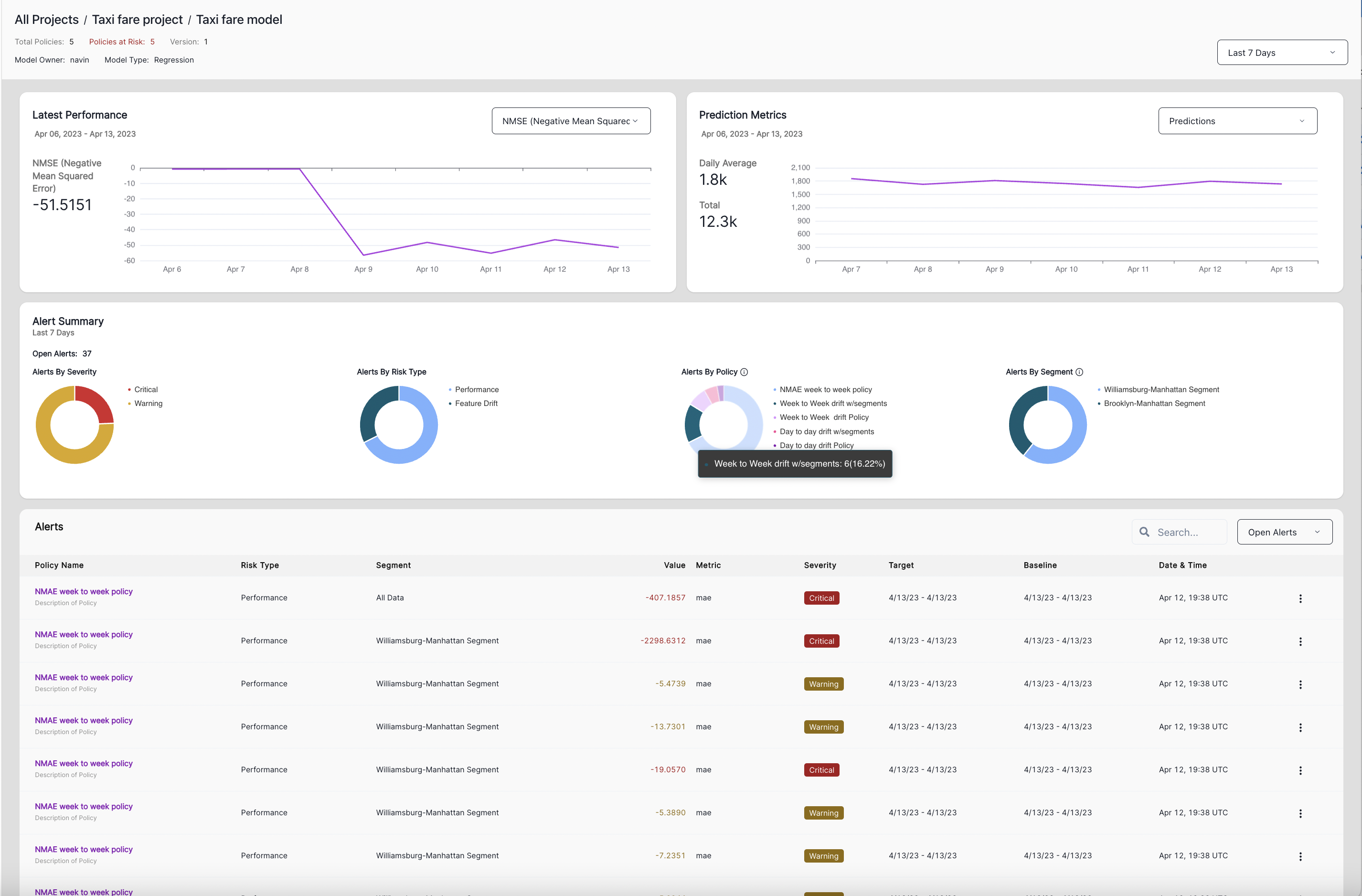

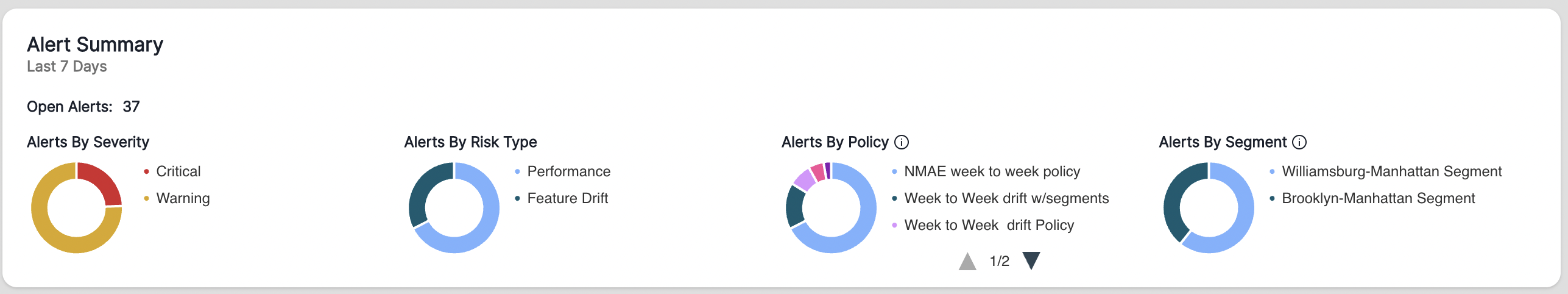

High-performance MLOps teams need solutions that can get into the granular details of model performance, on the fly and in real time, to understand the critical problems that jeopardize a model’s reliability, and that can do this on the largest and most complex models we can think of, at minimal cost.

Monitoring at scale means not only watching for behavior changes but also being able to look into the data from many dimensions: day to day, week to week, month to month, at the desired sensitivity level, into segments and subsegments, and in the context of real-world dynamics and business needs. High-scale monitoring also means drilling into large numbers of features, through the noise of alerts, to identify drift that matters. Even significant drift may not matter if its impact doesn’t meet the sensitivity thresholds. In contrast, other drift may appear smaller or less noticeable at the surface level but will harm the business if it is not corrected.

High-scale ML model monitoring is not a scheduled “batch job” approach. It’s not the BI of yesteryear. It is real-time, on-the-fly drill-down analytics on input and output data at extremely high volumes, e.g., billions of data points.

The ability to scale at very low cost is essential. Other companies have scaling capabilities, but usually at much higher costs. We optimize the VIANOPS tools to reduce overall cost, running 1,000 to 10,000 times faster than some popular, large-scale data processing tools at the same cost. This leads to more tangible business outcomes for those using our tools and makes them more accessible.
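To make the idea of segment-level drift and sensitivity thresholds more concrete, here is a minimal sketch in Python. It is an illustration only, not how VIANOPS computes drift: it assumes the Population Stability Index (PSI) as the drift metric, a rule-of-thumb alert threshold of 0.2, and hypothetical names (`psi`, `drift_by_segment`, the `amount` feature, the `web` and `mobile` segments) with synthetic data.

```python
import math
import random
from collections import defaultdict


def psi(baseline, current, bins=10, eps=1e-6):
    """Population Stability Index between two numeric samples.

    Bin edges are equal-width over the baseline range (an assumption
    made for simplicity; quantile bins are another common choice).
    """
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def histogram(values):
        counts = [0] * bins
        for v in values:
            # Clamp out-of-range values into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        total = len(values)
        # Floor each proportion at eps so the logarithm is defined.
        return [max(c / total, eps) for c in counts]

    p, q = histogram(baseline), histogram(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


def drift_by_segment(baseline_rows, current_rows, feature, segment_key,
                     threshold=0.2):
    """Score one feature per segment and flag segments over the threshold."""
    def group(rows):
        grouped = defaultdict(list)
        for row in rows:
            grouped[row[segment_key]].append(row[feature])
        return grouped

    base, cur = group(baseline_rows), group(current_rows)
    report = {}
    # Only segments present in both windows can be compared.
    for segment in base.keys() & cur.keys():
        score = psi(base[segment], cur[segment])
        report[segment] = {"psi": score, "alert": score >= threshold}
    return report


# Synthetic example: the "mobile" segment shifts, the "web" segment does not.
random.seed(0)


def sample(segment, mean, n=5000):
    return [{"segment": segment, "amount": random.gauss(mean, 1.0)}
            for _ in range(n)]


baseline = sample("web", 0.0) + sample("mobile", 0.0)
current = sample("web", 0.0) + sample("mobile", 1.5)

report = drift_by_segment(baseline, current, "amount", "segment")
for segment, result in sorted(report.items()):
    print(segment, round(result["psi"], 3), result["alert"])
```

In this sketch the threshold plays the role of the sensitivity level: raising or lowering it changes which segments raise an alert. A production system would also need to run such comparisons across many features and time windows, which is where the scale and cost concerns discussed above come in.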

VIANOPS High-Performance ML Model Monitoring

In architecting the VIANOPS platform, we approached it with a unique set of design principles:

- Massive reach and depth in monitoring the most complex, largest, layered, high-volume models we could imagine.

- Unparalleled flexibility for the user to monitor infinite possibilities, across segments and subsegments, without limitation.

- Real-time, on-the-fly power to drill into data to see what is happening. Anytime; as-needed; ad-hoc.

- Unmatched user experience to transform the frustration of data science and MLOps teams working with today’s tools into user delight – and improve productivity.

- Extreme choice for companies to run on any cloud and integrate any data source.

- Optimized tools at scale with reduced costs.